What are we modeling? Using predictive fit to inform effect metric choice in meta-analysis

2025-10-09

Research Synthesis

The systematic integration of empirical results across multiple sources of evidence, for purposes of drawing generalizations [1].

Meta-Analysis

Statistical models and methods to support quantitative research synthesis.

Fields that rely on research synthesis

- Medicine (Cochrane Collaboration)

- Education (What Works Clearinghouse)

- Psychology

- Social policy (justice, welfare, public health, etc.)

- Economics, international development

- Ecology and environmental science

- Physical sciences

Some background on meta-analysis

The problem of effect metric choice

Proposal: Use predictive fit criteria to inform metric choice

Illustrations

Discussion

Canonical Meta-Analysis

We observe summary results from each of \(k\) studies:

- \(T_i\): effect size estimate

- \(se_i\): standard error of the effect size estimate

- \(N_i\), \(\mathbf{x}_i\): sample size, other study features

- A summary random effects model: \[ \begin{aligned} T_i &\sim N\left(\theta_i, \ se_i^2 \right) \\ \theta_i &\sim N\left(\mu, \ \tau^2\right) \end{aligned} \]

- A random effects meta-regression: \[ \begin{aligned} T_i &\sim N\left(\theta_i, \ se_i^2 \right) \\ \theta_i &\sim N\left(\mathbf{x}_i \boldsymbol\beta,\ \tau^2\right) \end{aligned} \]

- “Conceptual unity of statistical methods” for meta-analysis [2] suggests that almost any effect size measure \(\theta_i\) can be used, as long as \(T_i \dot{\sim} N\left(\theta_i, \ se_i^2 \right)\).

Prediction Interval
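The slides do not state which estimator of \(\tau^2\) or which prediction-interval formula produced the 80% PIs reported below. As a minimal sketch, assuming the DerSimonian-Laird moment estimator and the normal approximation \(\hat\mu \pm z_{0.90}\sqrt{\hat\tau^2 + \widehat{SE}(\hat\mu)^2}\), a fit of the summary random effects model and its prediction interval for a new study's \(\theta\) could look like this (the function name `random_effects_dl` and the toy data are hypothetical):

```python
import math
from statistics import NormalDist

def random_effects_dl(T, se, pi_level=0.80):
    """Fit T_i ~ N(theta_i, se_i^2), theta_i ~ N(mu, tau^2) by the
    DerSimonian-Laird method of moments (an assumed estimator) and return
    mu, tau2, se(mu), I^2 (percent) and a prediction interval for a new theta."""
    k = len(T)
    w = [1.0 / s ** 2 for s in se]                           # inverse-variance weights
    sw = sum(w)
    mu_fe = sum(wi * ti for wi, ti in zip(w, T)) / sw        # fixed-effect mean
    Q = sum(wi * (ti - mu_fe) ** 2 for wi, ti in zip(w, T))  # Cochran's Q
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (Q - (k - 1)) / c)                       # truncated at zero
    w_re = [1.0 / (s ** 2 + tau2) for s in se]               # random-effects weights
    mu = sum(wi * ti for wi, ti in zip(w_re, T)) / sum(w_re)
    se_mu = math.sqrt(1.0 / sum(w_re))
    I2 = 100.0 * max(0.0, (Q - (k - 1)) / Q) if Q > 0 else 0.0
    # Normal-approximation prediction interval: mu +/- z * sqrt(tau^2 + se(mu)^2)
    z = NormalDist().inv_cdf(0.5 + pi_level / 2)
    half = z * math.sqrt(tau2 + se_mu ** 2)
    return {"mu": mu, "tau2": tau2, "se_mu": se_mu, "I2": I2, "pi": (mu - half, mu + half)}

# Hypothetical toy data, for illustration only
fit = random_effects_dl([0.1, 0.3, 0.5], [0.1, 0.1, 0.1])
print(round(fit["mu"], 3), round(fit["tau2"], 3), round(fit["I2"], 1))  # → 0.3 0.03 75.0
```

Using a \(t_{k-2}\) quantile in place of \(z_{0.90}\) is a common alternative; it gives a wider interval when \(k\) is small.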

Effect Metric Menagerie

Effect Metric Families

Single-group summaries

- Raw proportions \(\pi\)

- Arcsine transformation \(a = \text{asin}\left(\sqrt{\pi}\right)\)

- Raw means \(\mu\)

Bivariate associations / psychometric

- Pearson’s correlation \(\rho\)

- Fisher’s \(z\)-transformation \(\zeta = \text{atanh}(\rho)\)

- Cronbach’s \(\alpha\) coefficients (or transformations thereof)

Group comparison of binary outcomes

- Risk differences \(\pi_1 - \pi_0\)

- Risk ratios (log-transformed) \(\log\left(\frac{\pi_1}{\pi_0}\right)\)

- Odds ratios (log-transformed) \(\log\left(\frac{\pi_1 / (1 - \pi_1)}{\pi_0 / (1 - \pi_0)}\right)\)

- Bivariate models for \(\pi_0, \pi_1\)
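As a minimal sketch, the first three binary-outcome metrics can be computed from a single study's 2×2 counts together with their usual large-sample standard errors. The helper name `binary_effect_sizes` and the example counts are hypothetical, and no continuity correction is applied, so zero cells would need separate handling:

```python
import math

def binary_effect_sizes(x1, n1, x0, n0):
    """Risk difference, log risk ratio and log odds ratio, each with its
    large-sample standard error, from one study's 2x2 counts.

    x1/n1: events / sample size in the treatment group; x0/n0: same for control.
    Assumes no zero cells (no continuity correction is applied).
    """
    p1, p0 = x1 / n1, x0 / n0
    rd = p1 - p0
    se_rd = math.sqrt(p1 * (1 - p1) / n1 + p0 * (1 - p0) / n0)
    log_rr = math.log(p1 / p0)
    se_log_rr = math.sqrt(1 / x1 - 1 / n1 + 1 / x0 - 1 / n0)
    log_or = math.log((x1 / (n1 - x1)) / (x0 / (n0 - x0)))
    se_log_or = math.sqrt(1 / x1 + 1 / (n1 - x1) + 1 / x0 + 1 / (n0 - x0))
    return {"RD": (rd, se_rd), "logRR": (log_rr, se_log_rr), "logOR": (log_or, se_log_or)}

# Hypothetical counts: 20/100 events under treatment, 10/100 under control
print(binary_effect_sizes(20, 100, 10, 100))
```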

Group comparison of continuous outcomes

- Raw mean differences \(\mu_1 - \mu_0\)

- Standardized mean differences \(\delta = \frac{\mu_1 - \mu_0}{\sigma}\)

- Response ratios (log-transformed) \(\lambda = \log\left(\frac{\mu_1}{\mu_0}\right)\)

- Probability of superiority
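A similar sketch for continuous outcomes, computed from group means, SDs and sample sizes, uses the pooled-SD standardized mean difference with Hedges' small-sample correction and the log response ratio. The SMD variance shown is one common large-sample approximation among several; the function name and example values are hypothetical:

```python
import math

def continuous_effect_sizes(m1, s1, n1, m0, s0, n0):
    """Raw mean difference, standardized mean difference (Hedges' g) and log
    response ratio, each with an approximate standard error.
    Group 1 = treatment, group 0 = control; m = mean, s = SD, n = sample size."""
    md = m1 - m0
    se_md = math.sqrt(s1 ** 2 / n1 + s0 ** 2 / n0)
    df = n1 + n0 - 2
    s_pooled = math.sqrt(((n1 - 1) * s1 ** 2 + (n0 - 1) * s0 ** 2) / df)
    d = md / s_pooled
    J = 1 - 3 / (4 * df - 1)                     # Hedges' small-sample correction
    g = J * d
    se_g = J * math.sqrt((n1 + n0) / (n1 * n0) + d ** 2 / (2 * (n1 + n0)))
    # Log response ratio: only meaningful when both means are positive
    lrr = math.log(m1 / m0)
    se_lrr = math.sqrt(s1 ** 2 / (n1 * m1 ** 2) + s0 ** 2 / (n0 * m0 ** 2))
    return {"MD": (md, se_md), "SMD": (g, se_g), "logRR": (lrr, se_lrr)}

# Hypothetical summary statistics
print(continuous_effect_sizes(12.0, 4.0, 50, 10.0, 4.0, 50))
```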

Metric choice methodology

Large literature on effect metrics for group comparison on binary outcomes.

Effect Metric Choice

Choice of metric is constrained by

- Study designs

- Data availability, reporting conventions

- Heterogeneity of study features (e.g., outcome scales)

Metric choice is driven by disciplinary conventions.

- In many applications, more than one metric could apply.

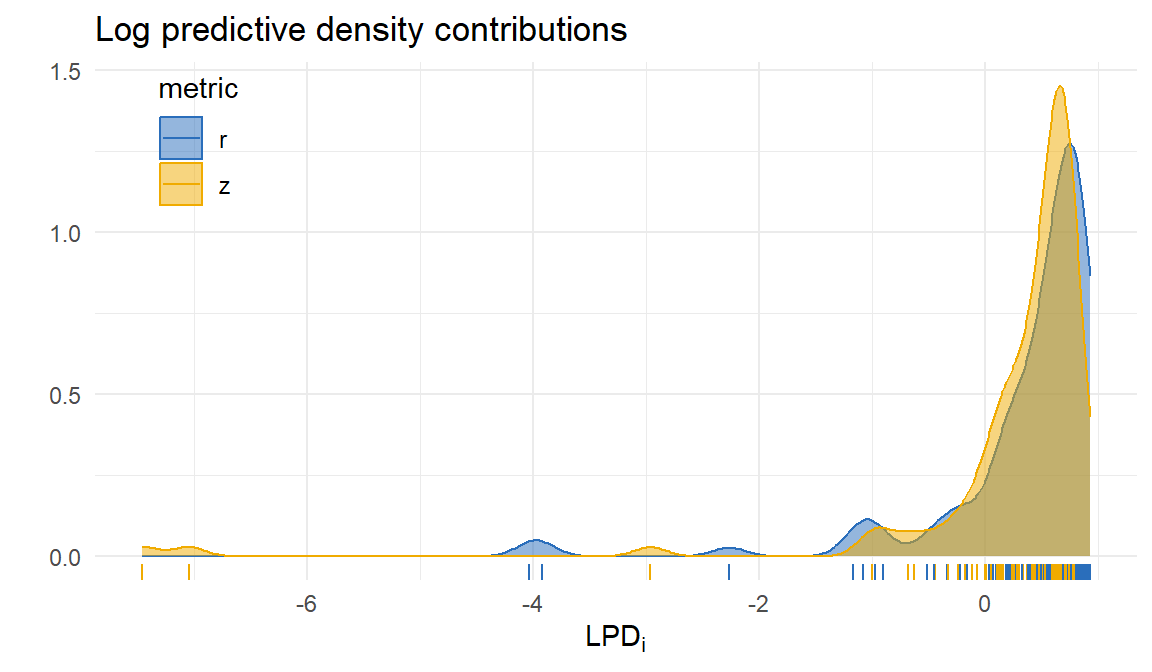

Metric choice by predictive fit criteria

Evaluate effect metrics by performance in predicting summary data for a new study.

- Data vector \(\mathbf{d}_i\) consisting of summary statistics used to compute effect size estimates.

- Use leave-one-out log-predictive density to measure predictive performance. \[ LPD = \frac{1}{k} \sum_{i=1}^{k} \log p\left(\mathbf{d}_i \left| \hat\mu_{(-i)}, \hat\tau_{(-i)}, \mathbf{x}_i, N_i\right.\right) \]
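Under simplifying assumptions, the LPD can be sketched in a few lines: the data vector is reduced to the effect size estimate itself (\(\mathbf{d}_i = T_i\)), \(\tau^2\) is estimated by DerSimonian-Laird (an assumption; any estimator could be substituted), and the leave-one-out estimates are plugged in rather than integrated over. Scoring the full \(\mathbf{d}_i\) on a common scale additionally needs Jacobian and auxiliary-model terms. The function names are hypothetical:

```python
import math

def dl_fit(T, se):
    """DerSimonian-Laird estimates (mu_hat, tau2_hat); needs at least 2 studies."""
    k = len(T)
    w = [1.0 / s ** 2 for s in se]
    sw = sum(w)
    mu_fe = sum(wi * ti for wi, ti in zip(w, T)) / sw
    Q = sum(wi * (ti - mu_fe) ** 2 for wi, ti in zip(w, T))
    tau2 = max(0.0, (Q - (k - 1)) / (sw - sum(wi ** 2 for wi in w) / sw))
    w_re = [1.0 / (s ** 2 + tau2) for s in se]
    return sum(wi * ti for wi, ti in zip(w_re, T)) / sum(w_re), tau2

def normal_logpdf(x, mean, var):
    return -0.5 * (math.log(2 * math.pi * var) + (x - mean) ** 2 / var)

def loo_lpd(T, se):
    """Leave-one-out LPD on the scale of T (needs k >= 3): refit without study i,
    then score T_i under its marginal predictive N(mu_(-i), tau2_(-i) + se_i^2)."""
    k = len(T)
    total = 0.0
    for i in range(k):
        mu_i, tau2_i = dl_fit(T[:i] + T[i + 1:], se[:i] + se[i + 1:])
        total += normal_logpdf(T[i], mu_i, tau2_i + se[i] ** 2)
    return total / k

# Hypothetical estimates and standard errors, for illustration only
print(loo_lpd([0.1, 0.3, 0.5, 0.2], [0.1, 0.1, 0.15, 0.2]))
```

Because this score is a density on the scale of \(T_i\), LPDs from two metrics are only comparable once both are expressed as densities of the same data.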

Two challenges

Polishing up models to generate predictions.

Conventional meta-analysis models only a one-dimensional summary \(f(\mathbf{d}_i)\) of each study's data (the effect size estimate), so predicting the full data vector requires auxiliary models for the remaining components.

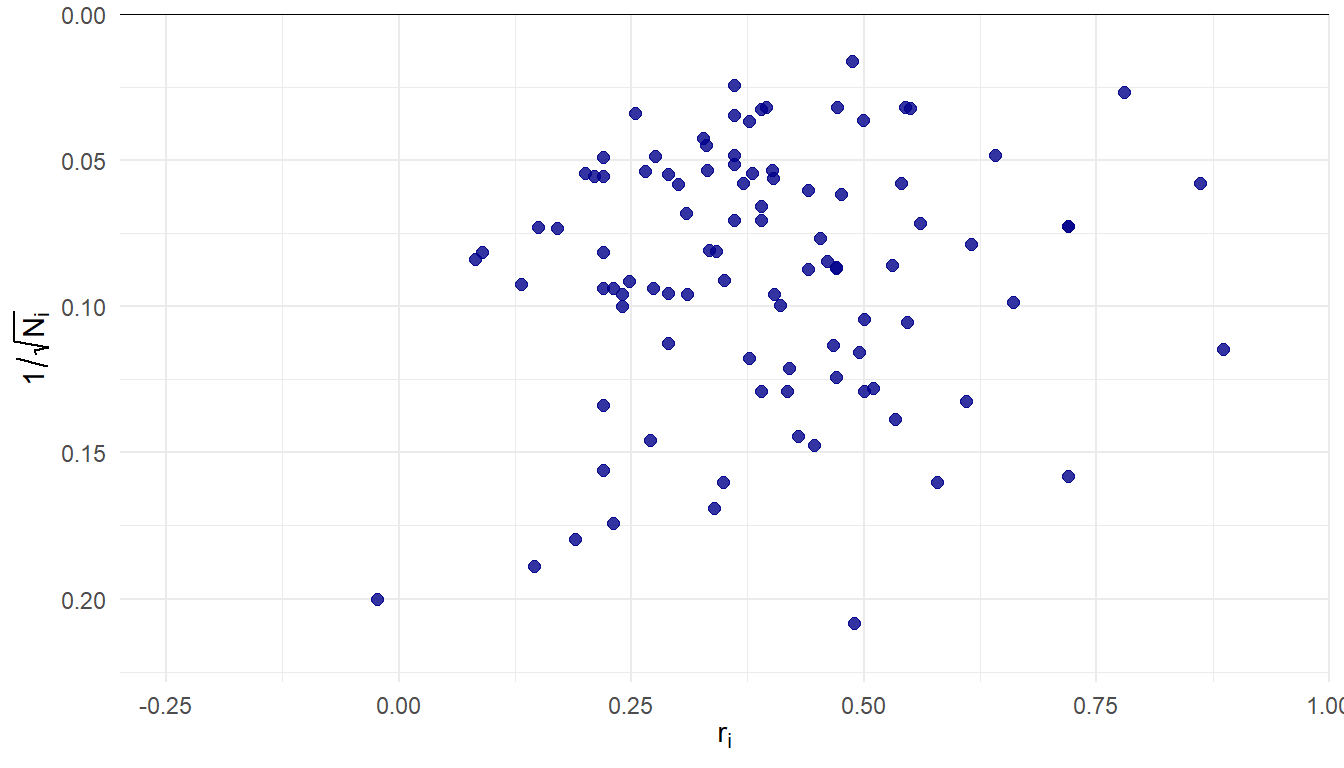

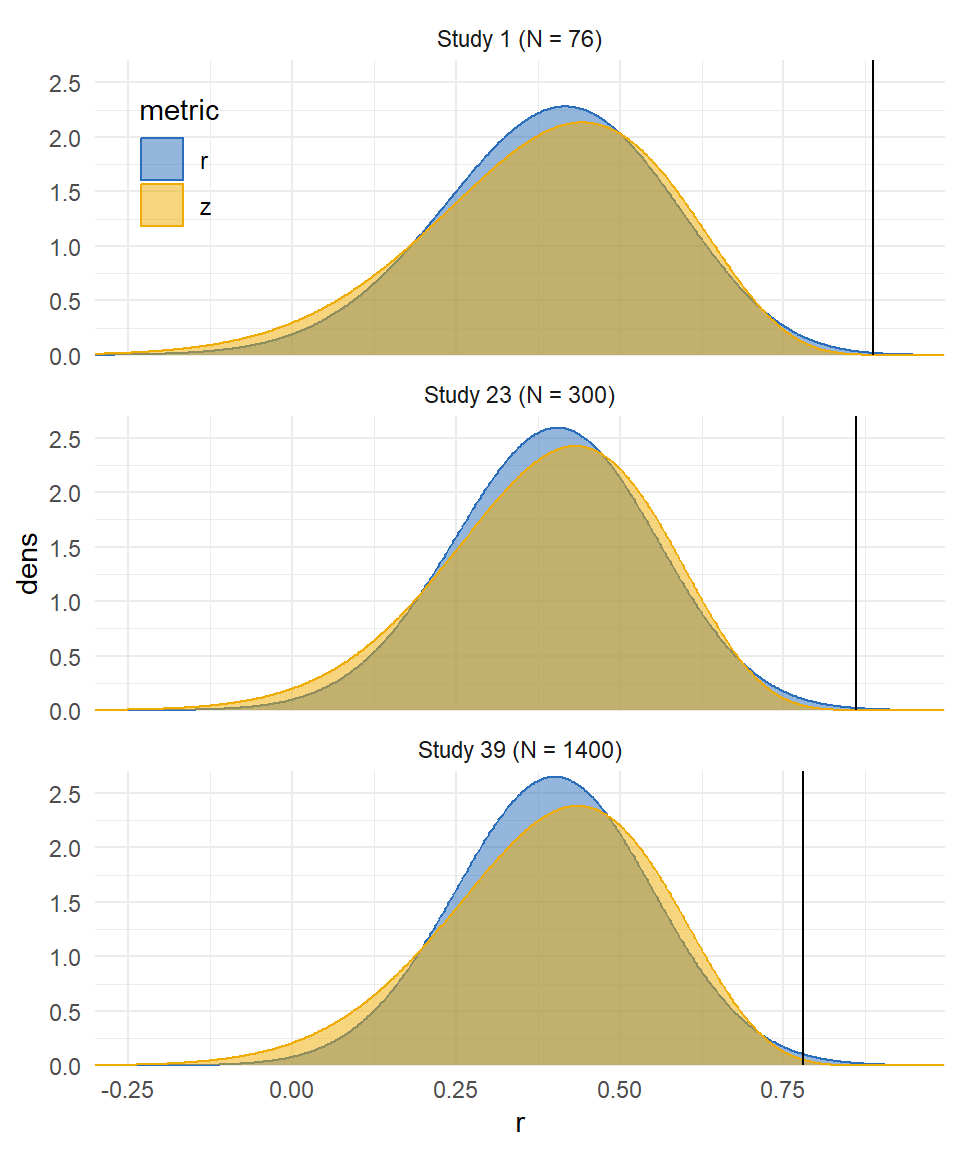

Class attendance and college grades

Credé and colleagues [19] reported a systematic review and meta-analysis of studies on the association between class attendance and grades/GPA in college.

99 correlation estimates, with sample sizes ranging from \(N_i\) = 23 to 3900 (median = 151, IQR = 76-335).

Bivariate associations

- The data: Pearson correlation between two variables of interest from a sample of \(N_i\) observations, \(r_i\).

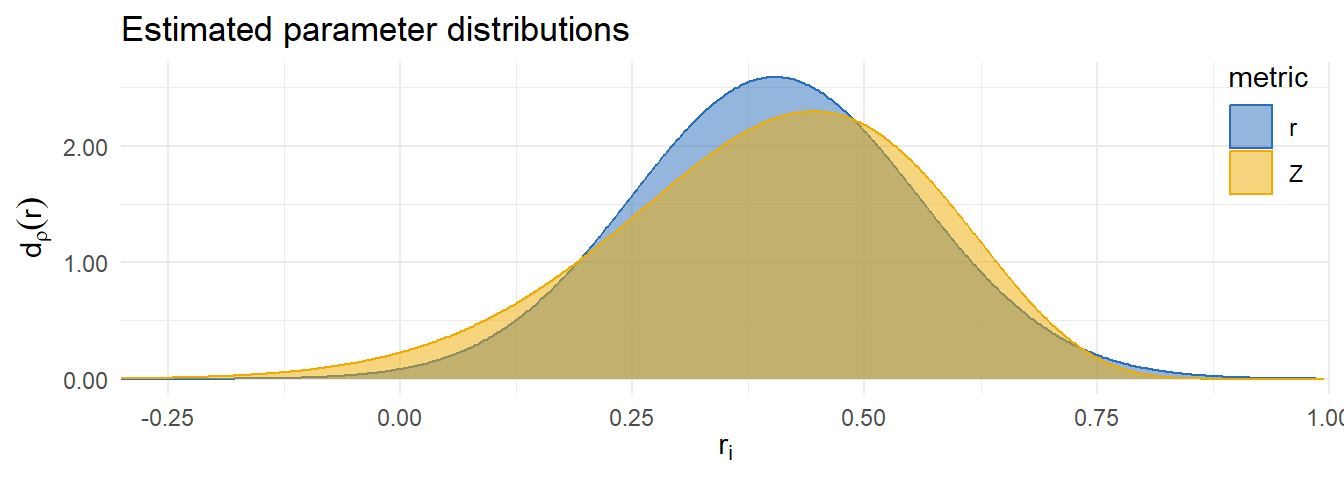

\(\rho\) metric

Effect size estimate \(r_i\), standard error \(\displaystyle{se_i = \frac{1 - r_i^2}{\sqrt{N_i}}}\)

Predictive model: \[ \begin{aligned} r_i &\dot{\sim} \ N\left(\rho_i, \ \frac{(1 - \rho_i^2)^2}{N_i}\right) \\ \rho_i &\sim \ N_{trunc}\left(\mu_\rho, \ \tau_\rho^2\right) \end{aligned} \]

\(\zeta = \text{atanh}(\rho)\) metric

Effect size estimate \(z_i = \text{atanh}(r_i)\), standard error \(\displaystyle{se_i = \frac{1}{\sqrt{N_i - 3}}}\)

Predictive model: \[ \begin{aligned} z_i &\dot{\sim} \ N\left(\zeta_i, \ \frac{1}{N_i - 3}\right) \\ \zeta_i &\sim \ N\left(\mu_\zeta, \ \tau_\zeta^2\right) \end{aligned} \]

Log-predictive density: \[ \begin{aligned} \log d_r\left(r_i \left| \hat\mu_{\zeta (-i)}, \hat\tau_{\zeta (-i)}, N_i \right.\right) &= \log d_z\left(z_i \left| \hat\mu_{\zeta (-i)}, \hat\tau_{\zeta (-i)}, N_i \right.\right) \\ &\quad - \log\left(1 - r_i^2\right) \end{aligned} \]
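The change of variables can be coded directly. The predictive distribution of \(z_i\) marginalizes over \(\zeta_i\), giving \(N\left(\mu_\zeta, \tau_\zeta^2 + 1/(N_i - 3)\right)\), and the Jacobian term \(-\log(1 - r_i^2)\) moves that density to the \(r\) scale so it can be compared with the \(\rho\)-metric model. A minimal sketch with plug-in (leave-one-out) estimates; the function name and example values are hypothetical:

```python
import math

def log_pred_density_r(r, N, mu_z, tau_z):
    """Log predictive density of an observed correlation r from a study of size N
    under the zeta-metric model z ~ N(zeta, 1/(N - 3)), zeta ~ N(mu_z, tau_z^2),
    expressed on the r scale via the change of variables z = atanh(r)."""
    z = math.atanh(r)
    var = tau_z ** 2 + 1.0 / (N - 3)            # marginal predictive variance of z
    log_dz = -0.5 * (math.log(2 * math.pi * var) + (z - mu_z) ** 2 / var)
    return log_dz - math.log(1.0 - r ** 2)      # Jacobian: dz/dr = 1 / (1 - r^2)

# Hypothetical held-out study (r = 0.45, N = 151) and plug-in estimates
print(log_pred_density_r(0.45, 151, 0.41, 0.2))
```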

Metric comparison

| Metric | Est. | 95% CI | 80% PI | LPD | SE |
|---|---|---|---|---|---|
| r | 0.40 | 0.37-0.44 | 0.20-0.60 | 0.34 | 0.09 |
| z | 0.41 | 0.37-0.45 | 0.16-0.61 | 0.22 | 0.12 |
| Difference | | | | 0.12 | 0.05 |

Outliers

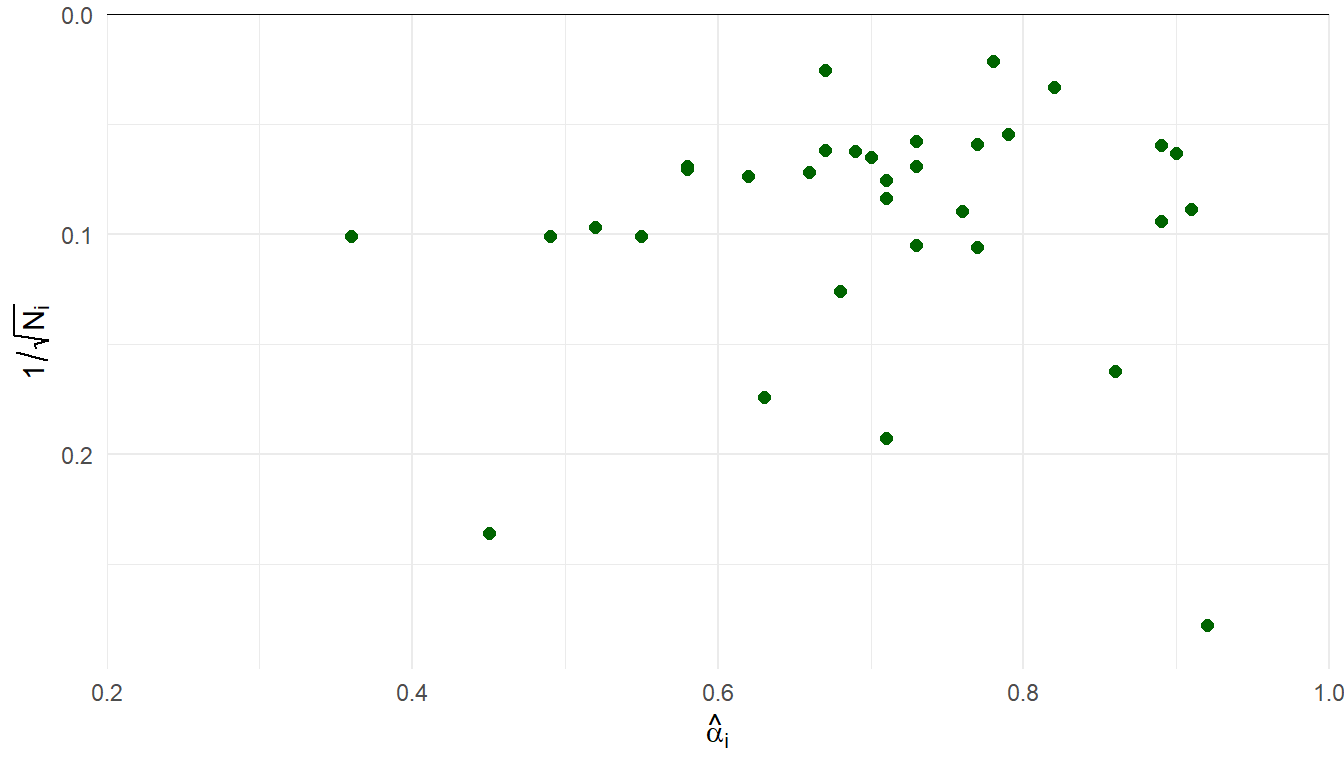

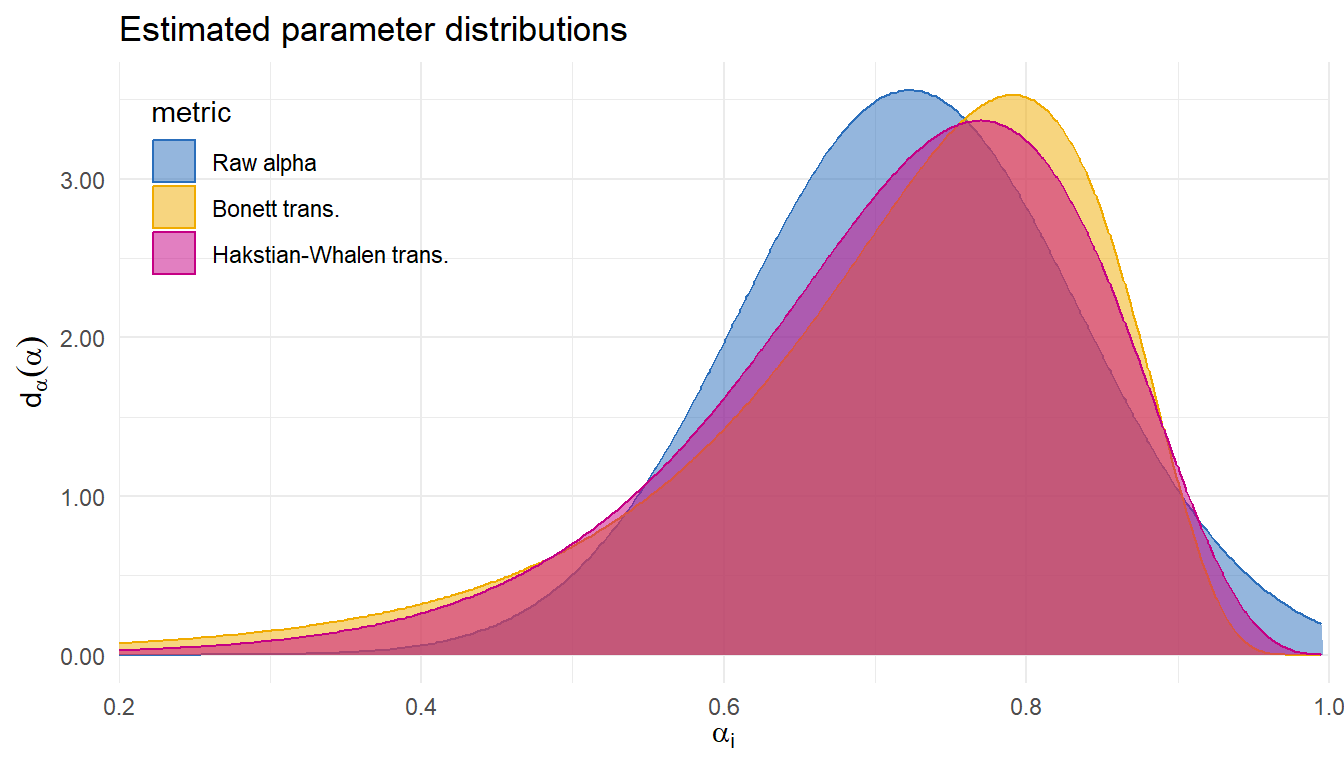

Reliability generalization of MIBS

Demir and colleagues [20] gathered 33 estimates of internal consistency (Cronbach \(\alpha\)) of the Mother-to-Infant Bonding Scale.

Sample sizes ranging from \(N_i\) = 13 to 2251 (median = 177, IQR = 98-260).

| Metric | Est. | 95% CI | 80% PI | LPD | SE |
|---|---|---|---|---|---|
| Raw alpha | 0.72 | 0.68-0.76 | 0.58-0.87 | 0.57 | 0.16 |
| Bonett trans. | 0.74 | 0.69-0.78 | 0.51-0.86 | 0.53 | 0.12 |
| Hakstian-Whalen trans. | 0.73 | 0.68-0.77 | 0.53-0.86 | 0.58 | 0.11 |
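The three rows differ only in the scale on which the random effects model is fit, with estimates back-transformed to the \(\alpha\) scale. As a sketch, here are the transformations with the large-sample variance approximations commonly attributed to Bonett (\(\ln(1 - \alpha)\)) and to Hakstian and Whalen (\((1 - \alpha)^{1/3}\)), plus the delta-method variance for raw \(\alpha\); treat the formulas as assumptions to check against a reference implementation. Here \(n\) is the sample size and \(k\) the number of items, and `ALPHA_METRICS` is a hypothetical name:

```python
import math

# Transformations of Cronbach's alpha used as effect metrics (assumed formulas).
# Each entry: (transform, back-transform, approximate sampling variance of the
# transformed estimate), where a = sample alpha, n = sample size, k = number of items.
ALPHA_METRICS = {
    "raw": (
        lambda a: a,
        lambda y: y,
        lambda a, n, k: 2 * k * (1 - a) ** 2 / ((k - 1) * (n - 2)),
    ),
    "bonett": (
        lambda a: math.log(1 - a),
        lambda y: 1 - math.exp(y),
        lambda a, n, k: 2 * k / ((k - 1) * (n - 2)),
    ),
    "hakstian_whalen": (
        lambda a: (1 - a) ** (1 / 3),
        lambda y: 1 - y ** 3,
        lambda a, n, k: 18 * k * (n - 1) * (1 - a) ** (2 / 3) / ((k - 1) * (9 * n - 11) ** 2),
    ),
}

# Hypothetical study: alpha = 0.80 from n = 177 respondents on a 10-item scale
for name, (f, f_inv, var) in ALPHA_METRICS.items():
    print(name, f(0.80), var(0.80, 177, 10))
```

A pooled estimate \(\hat\mu\) on a transformed scale is then reported on the \(\alpha\) scale as `f_inv(mu_hat)`.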

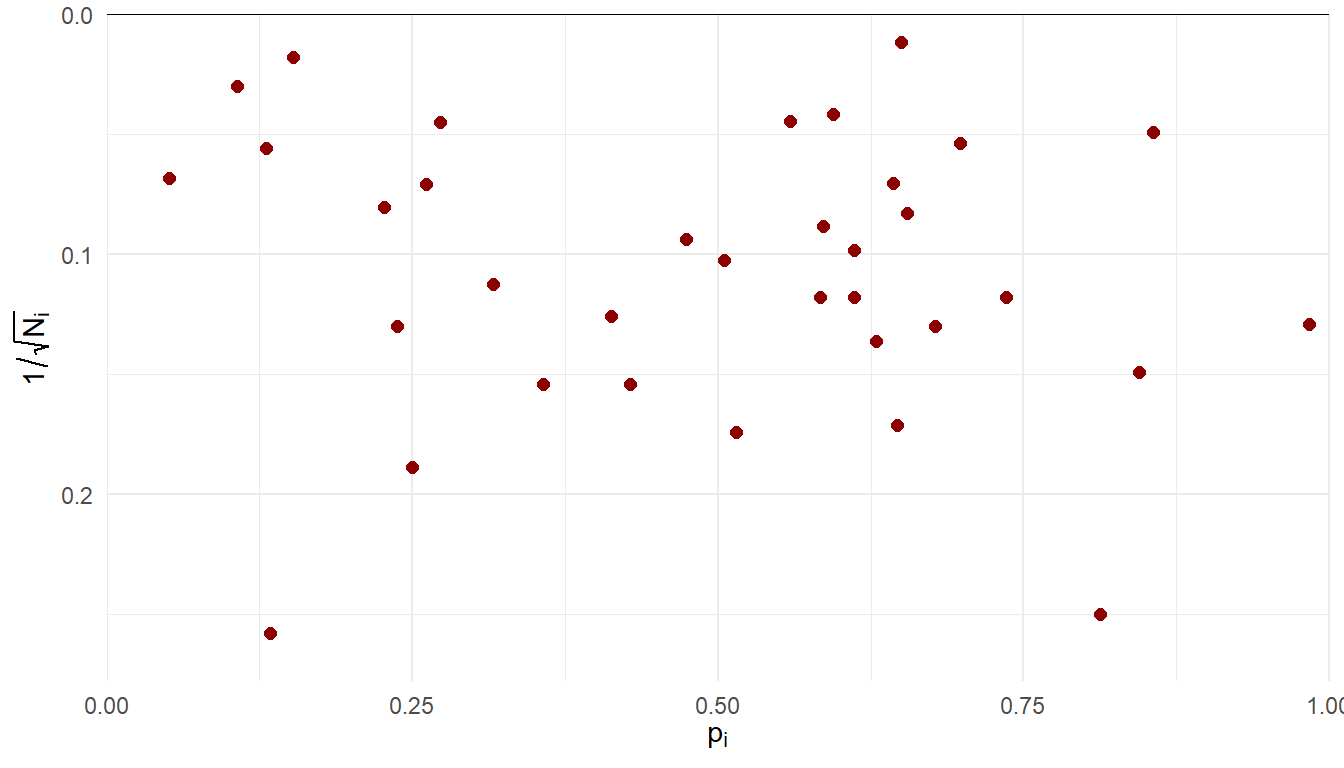

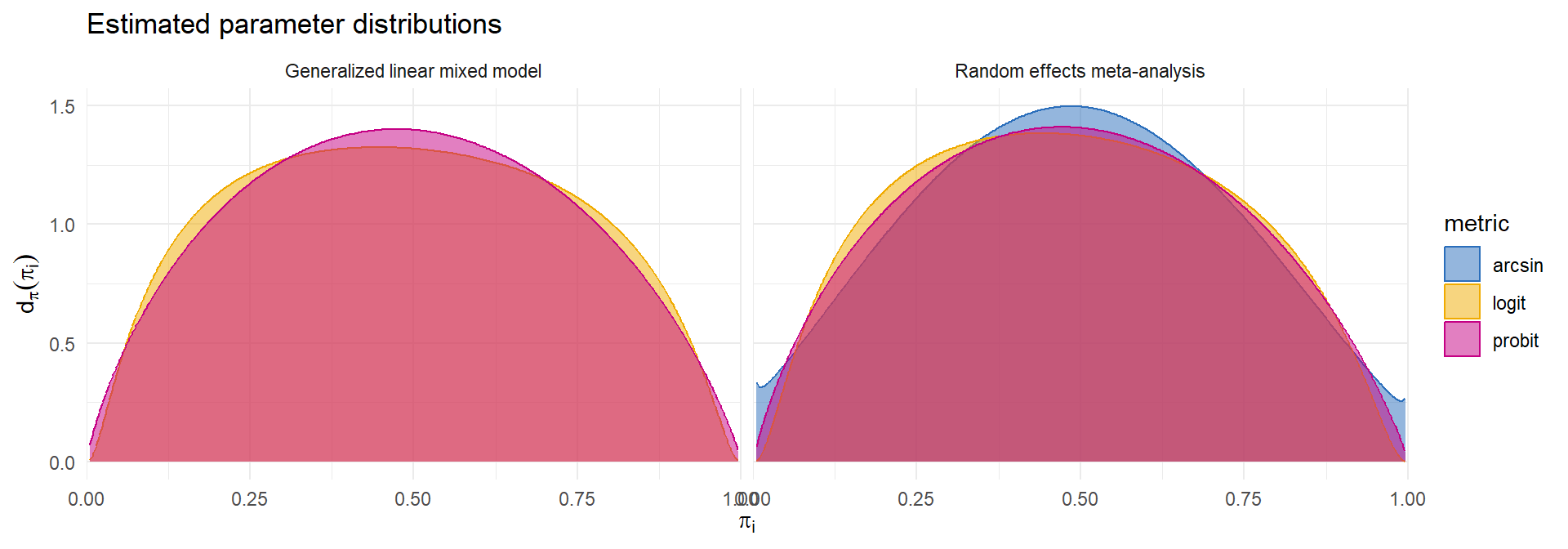

Incidence of olfactory loss in COVID-19 patients

Hannum and colleagues [21] compiled data on rates of olfactory loss across 35 studies of COVID-19 patients.

Sample sizes ranging from \(N_i\) = 15 to 7178 (median = 95, IQR = 56.5 - 267.5).

Many different transformations of \(p_i\) are used as effect size measures (identity, logit, probit, arcsin-square-root, Freeman-Tukey).
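Each transformation \(g\) comes with a delta-method variance function \(h\), so that \(g(p_i)\) is approximately \(N\left(g(\pi_i), h(\pi_i)/N_i\right)\). A minimal sketch for four of the listed transformations (the Freeman-Tukey double arcsine is omitted because its scaling conventions vary); the dictionary name `TRANSFORMS` is hypothetical, and in practice \(h\) is evaluated at the observed \(p_i\), which must lie strictly between 0 and 1 for the logit and probit:

```python
import math
from statistics import NormalDist

_Z = NormalDist()  # standard normal

# g: transformation of a proportion p (0 < p < 1)
# h: N times the delta-method sampling variance of g(p), i.e. h(p) = p(1 - p) g'(p)^2
TRANSFORMS = {
    "identity": (lambda p: p, lambda p: p * (1 - p)),
    "logit": (lambda p: math.log(p / (1 - p)), lambda p: 1 / (p * (1 - p))),
    "probit": (
        lambda p: _Z.inv_cdf(p),
        lambda p: p * (1 - p) / _Z.pdf(_Z.inv_cdf(p)) ** 2,
    ),
    "arcsine": (lambda p: math.asin(math.sqrt(p)), lambda p: 0.25),
}

# Hypothetical study with observed proportion p_i = 0.48 and N_i = 95
g, h = TRANSFORMS["logit"]
print(g(0.48), h(0.48) / 95)   # effect size estimate and its approximate variance
```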

Could use either a conventional random effects model or a generalized linear mixed model (GLMM).

- Which predictive model to use?

\[ \begin{aligned} g(p_i) &\dot{\sim} \ N\left(g(\pi_i), \ \frac{h(\pi_i)}{N_i}\right) \qquad & N_i p_i &\sim \ Binom\left(N_i, \ \pi_i\right)\\ g(\pi_i) &\sim \ N\left(\mu_g, \ \tau_g^2\right) \qquad & g(\pi_i) &\sim \ N\left(\mu_g, \ \tau_g^2\right) \end{aligned} \]
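Under the binomial predictive model, the probability of a held-out count \(N_i p_i\) integrates the binomial likelihood over the random effects distribution on the \(g\) scale. A minimal sketch for \(g = \text{logit}\), using a midpoint rule over \(\mu_g \pm 8\tau_g\); the grid settings, function names and example values are hypothetical choices, not necessarily how the slides' LPDs were computed:

```python
import math

def _softplus(u):
    # log(1 + exp(u)) without overflow
    return max(u, 0.0) + math.log1p(math.exp(-abs(u)))

def log_binom_pmf_logit(x, n, eta):
    """log Binomial(x; n, p) with p = expit(eta), computed on the logit scale."""
    # log p = -softplus(-eta) and log(1 - p) = -softplus(eta)
    return (math.lgamma(n + 1) - math.lgamma(x + 1) - math.lgamma(n - x + 1)
            - x * _softplus(-eta) - (n - x) * _softplus(eta))

def log_marginal_binom(x, n, mu, tau, grid=400, width=8.0):
    """log of the integral of Binom(x; n, expit(eta)) * N(eta; mu, tau^2) over eta,
    approximated by a midpoint rule on [mu - width*tau, mu + width*tau]."""
    if tau == 0:
        return log_binom_pmf_logit(x, n, mu)
    h = 2 * width * tau / grid
    terms = []
    for j in range(grid):
        eta = mu - width * tau + (j + 0.5) * h
        # log of (normal density at eta) * (grid spacing)
        log_w = math.log(h / (tau * math.sqrt(2 * math.pi))) - 0.5 * ((eta - mu) / tau) ** 2
        terms.append(log_w + log_binom_pmf_logit(x, n, eta))
    m = max(terms)                               # log-sum-exp for stability
    return m + math.log(sum(math.exp(t - m) for t in terms))

# Hypothetical study (46 of 95 patients) and illustrative logit-scale parameters
print(log_marginal_binom(46, 95, -0.1, 0.9))
```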

Incidence of olfactory loss in COVID-19 patients

| Model | Metric | Est. | 95% CI | 80% PI | LPD (normal) | SE (normal) | LPD (binomial) | SE (binomial) |
|---|---|---|---|---|---|---|---|---|
| RE | logit | 0.48 | 0.38-0.58 | 0.17-0.81 | -5.10 | 0.36 | -5.11 | 0.36 |
| RE | probit | 0.49 | 0.39-0.58 | 0.17-0.81 | -5.18 | 0.40 | -5.18 | 0.40 |
| RE | arcsin | 0.49 | 0.40-0.58 | 0.17-0.81 | -4.96 | 0.32 | -4.96 | 0.32 |
| GLMM | logit | 0.48 | 0.38-0.59 | 0.16-0.82 | | | -5.43 | 0.55 |
| GLMM | probit | 0.49 | 0.39-0.58 | 0.17-0.82 | | | -5.24 | 0.43 |

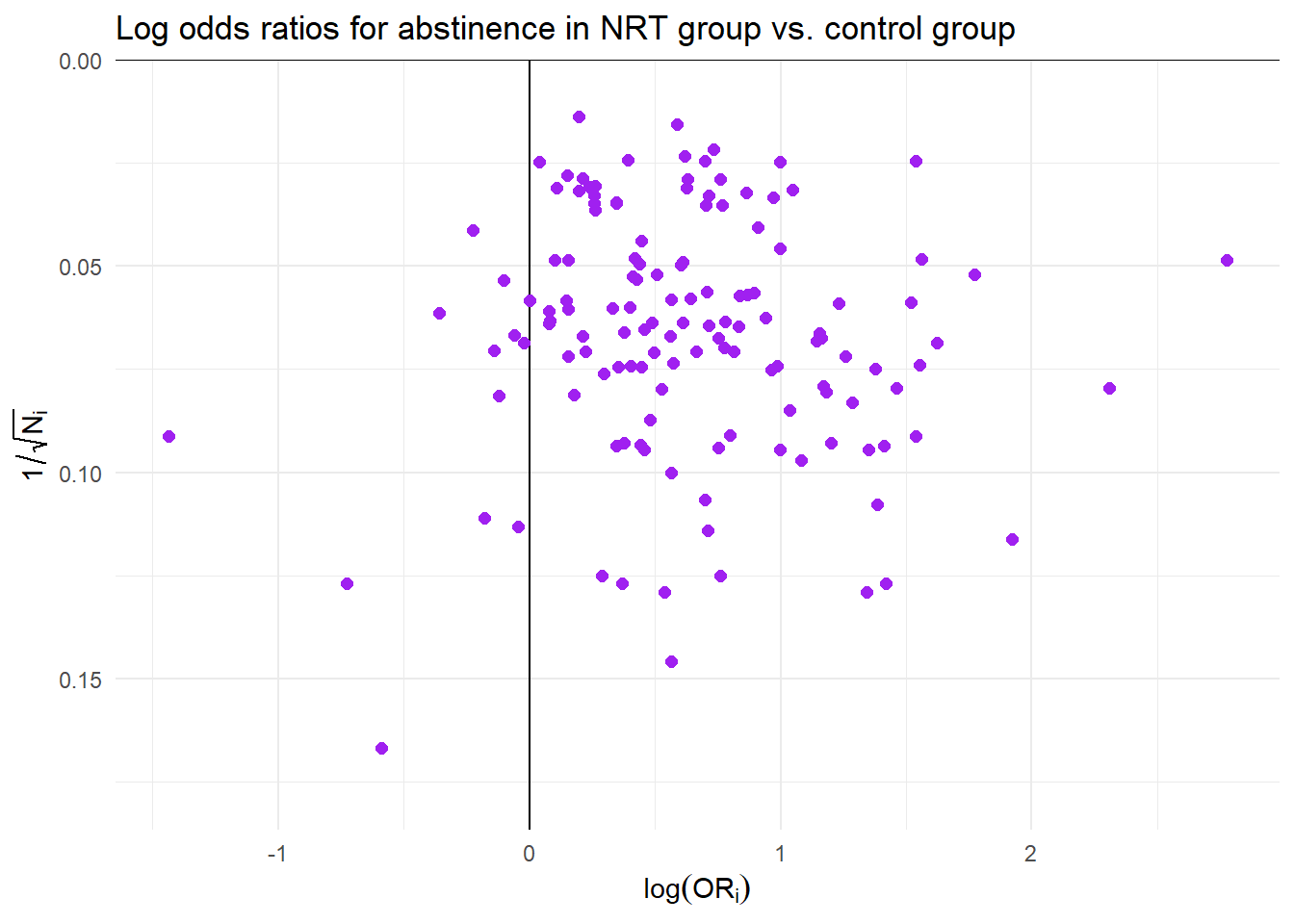

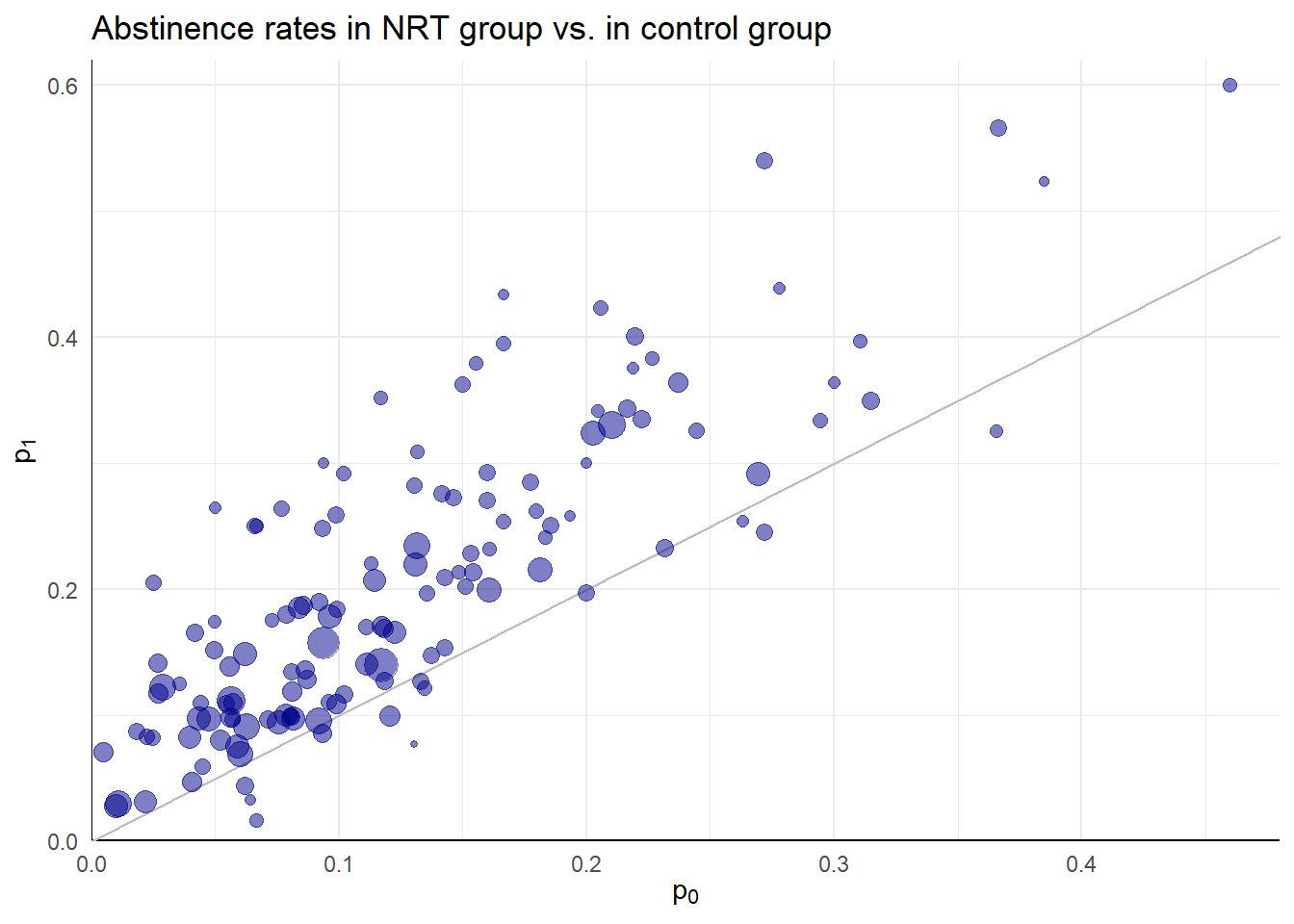

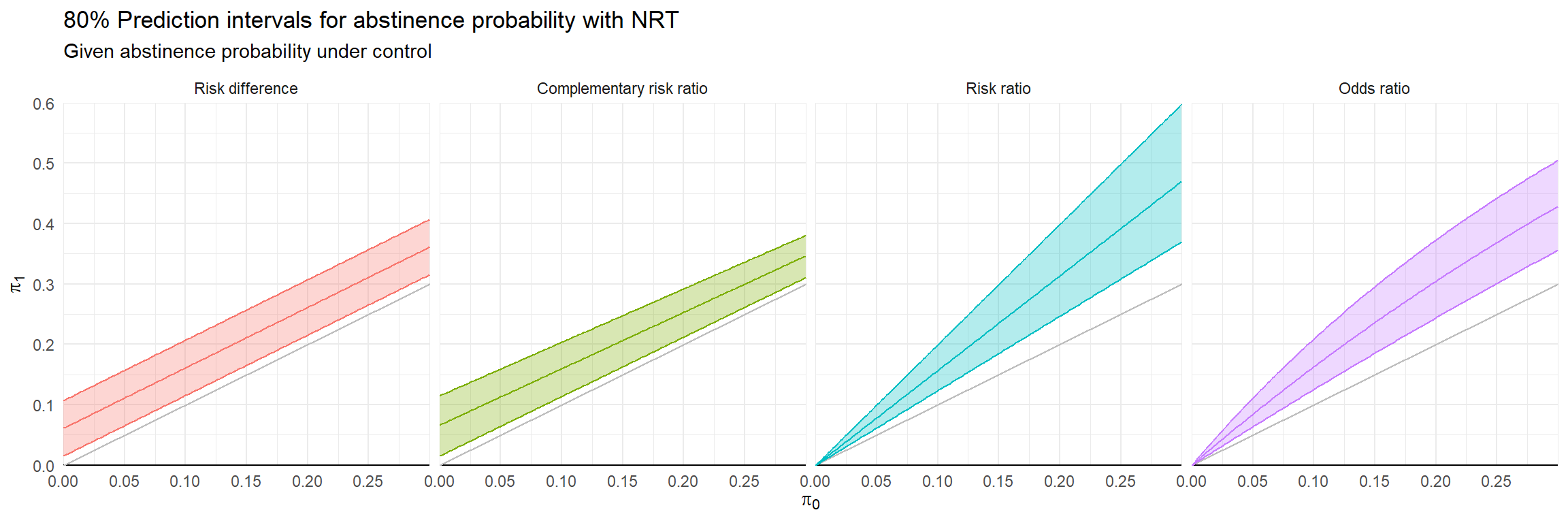

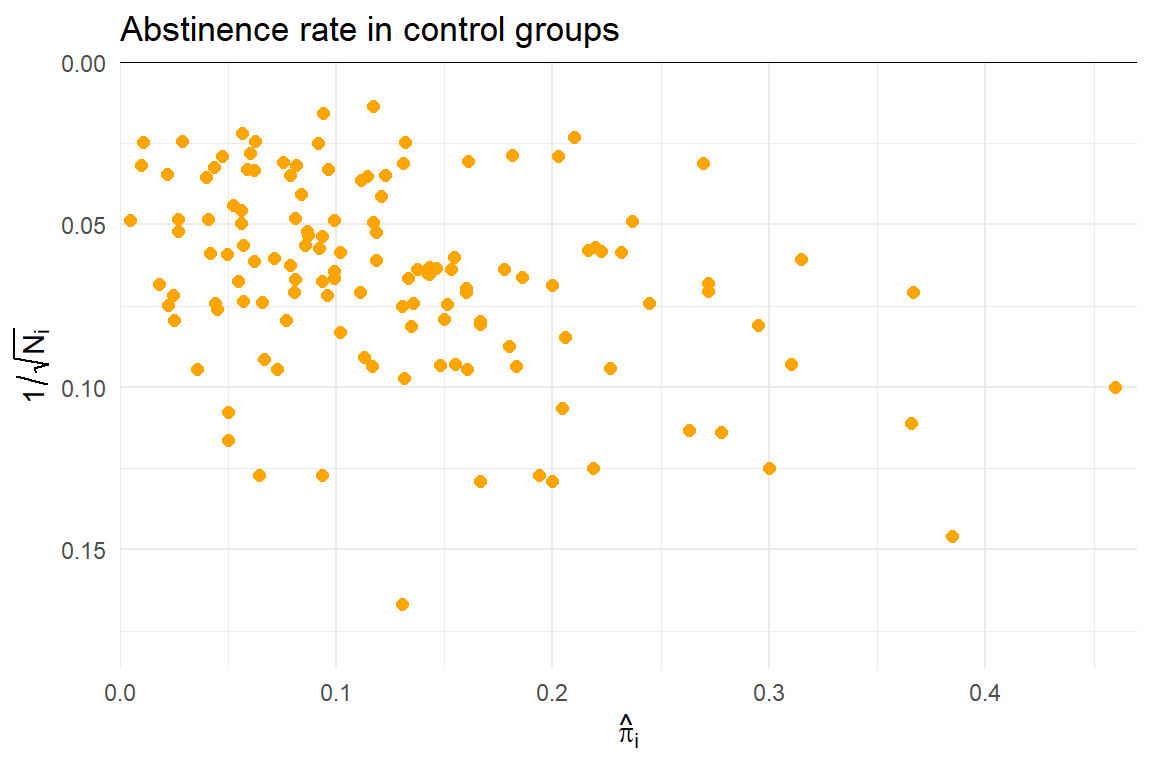

Effectiveness of nicotine replacement therapy

Cochrane systematic review of the effects of nicotine replacement therapy vs. control on smoking cessation, defined as abstinence at 6+ months of follow-up [22].

Sample sizes ranging from \(N_i\) = 36 to 5290 (median = 240.5, IQR = 153.5 - 428.5).

Random effects meta-analysis

- Different ES metrics suggest very different implications and different degrees of heterogeneity

| Metric | Est. | 95% CI | 80% PI | I² (%) |
|---|---|---|---|---|
| Risk difference | 0.06 | 0.05-0.07 | 0.02-0.11 | 63.50 |
| Complementary risk ratio | 1.07 | 1.06-1.08 | 1.02-1.13 | 65.51 |
| Risk ratio | 1.57 | 1.48-1.66 | 1.23-1.99 | 36.88 |
| Odds ratio | 1.75 | 1.63-1.88 | 1.29-2.38 | 39.06 |

Effect metric comparison

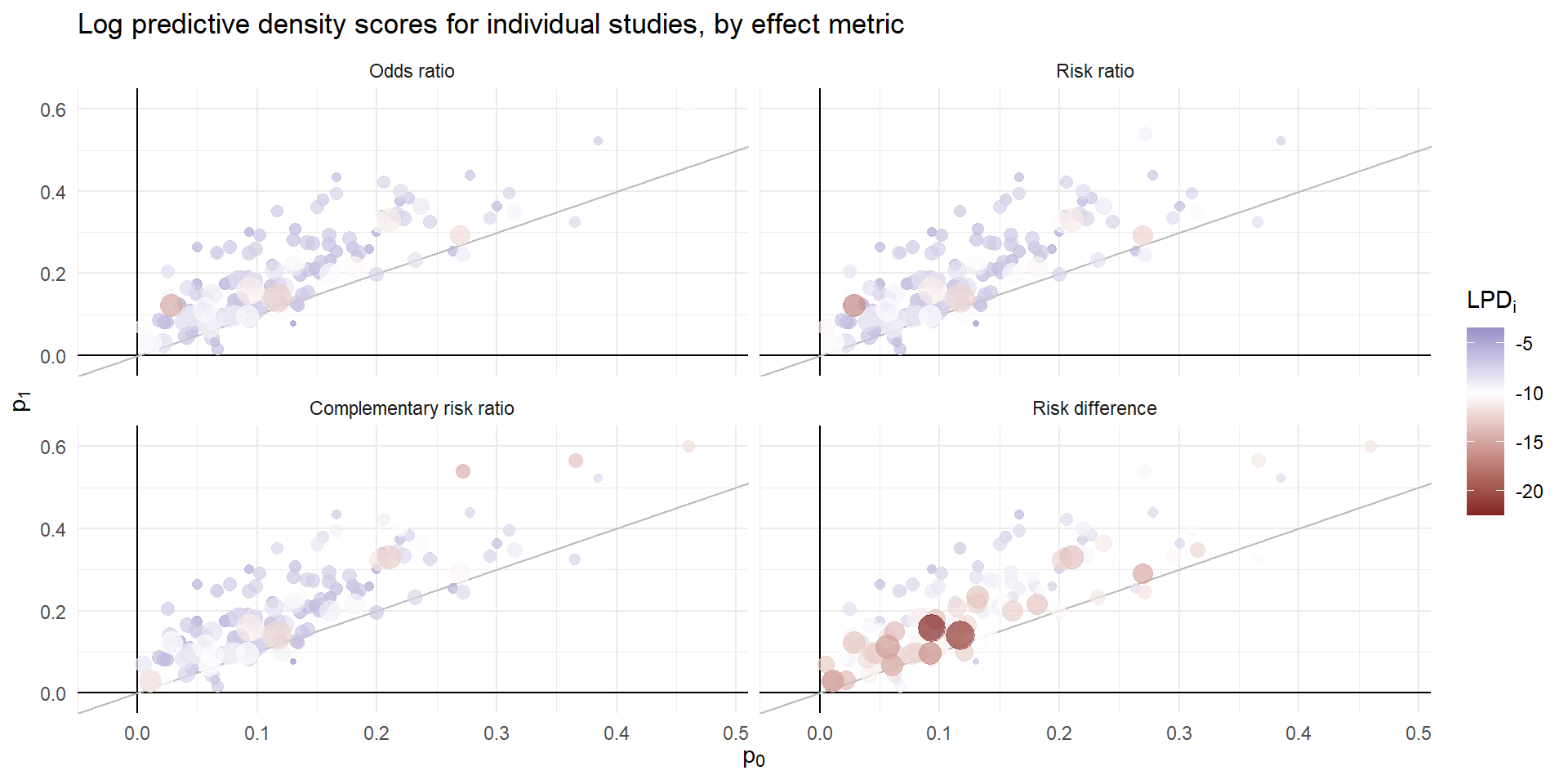

Goal: evaluate predictions of \(\hat\pi_{0i}\), \(\hat\pi_{1i}\) using log-predictive density.

Conventional RE meta-analysis is a model for \(f(\hat\pi_{0i}, \hat\pi_{1i})\).

Possible auxiliary models for \(\hat\pi_{0i}\) or \(\hat\pi_{1i}\):

- Random effects meta-analysis/meta-regression

- Generalized linear mixed model

- Beta-binomial regression
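For the binary-outcome metrics, the mapping \(g(\pi_{0i}, \theta_i)\) from the control-group risk and the study's effect on a given metric to the treatment-group risk follows from inverting each metric's definition (shown here for three of the four metrics compared):

\[ \begin{aligned} \text{Risk difference:} \quad & g(\pi_0, \theta) = \pi_0 + \theta \\ \text{Log risk ratio:} \quad & g(\pi_0, \theta) = \pi_0 e^{\theta} \\ \text{Log odds ratio:} \quad & g(\pi_0, \theta) = \frac{\pi_0 e^{\theta}}{1 - \pi_0 + \pi_0 e^{\theta}} \end{aligned} \]

Only the odds ratio mapping stays inside \((0, 1)\) for every \(\theta\); under the risk difference and risk ratio, \(g\) can leave the unit interval, so a predictive model built on those metrics must handle such values in some way.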

Predictive model

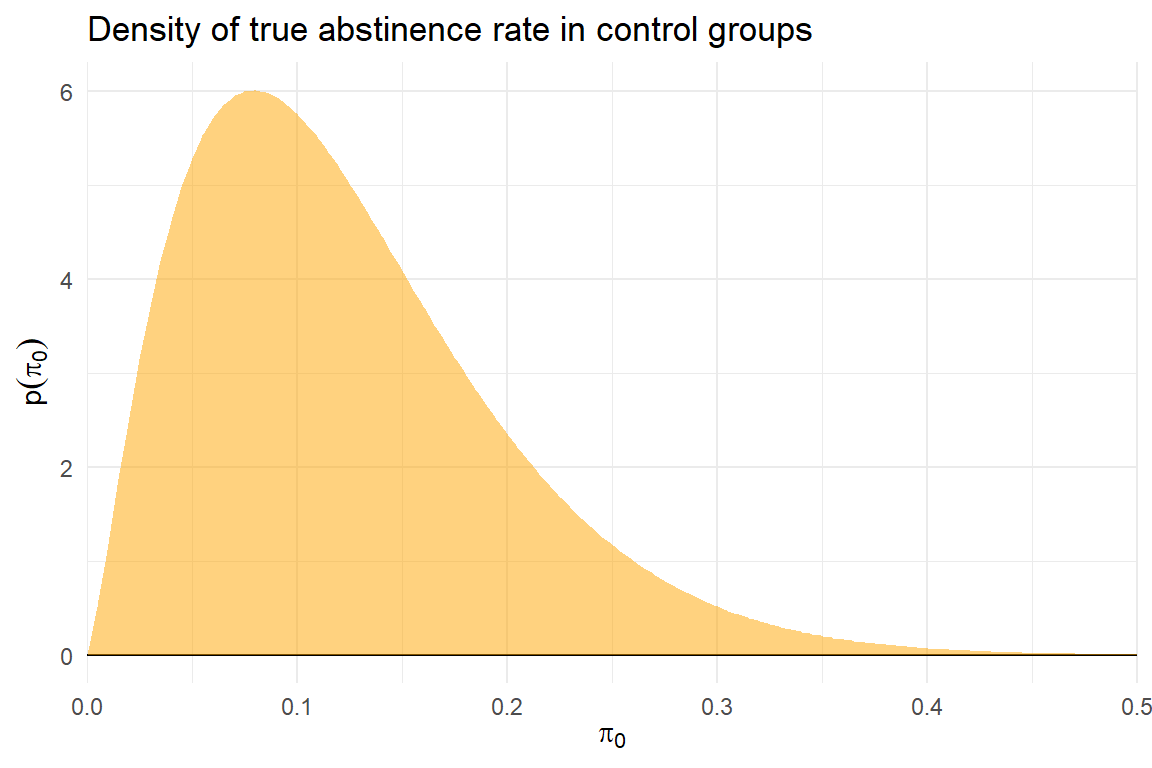

\[ \begin{aligned} &\text{Auxiliary model:} \quad & \pi_{0i} &\sim Beta\left(\alpha, \ \beta\right) \\ &\text{RE meta-analysis model:} \quad & \theta_i &\sim N\left(\mu, \ \tau^2\right) \\ &\text{Observation model:} \quad & N_{0i} \hat\pi_{0i} &\sim Binom\left( N_{0i}, \ \pi_{0i} \right) \\ & & N_{1i} \hat\pi_{1i} &\sim Binom\left( N_{1i}, \ g(\pi_{0i}, \theta_i) \right) \end{aligned} \]
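Given values for \(\alpha, \beta, \mu, \tau\) (in a leave-one-out analysis, the estimates from the other \(k-1\) studies), the predictive probability of one study's pair of event counts has no closed form, but a Monte Carlo average over draws of \(\pi_{0i}\) and \(\theta_i\) approximates it. This sketch assumes the log odds ratio metric, so \(\text{logit}(\pi_{1i}) = \text{logit}(\pi_{0i}) + \theta_i\); the function names, seed, number of draws and example values are hypothetical, and the slides do not say how their LPDs were computed:

```python
import math
import random

def _softplus(u):
    # log(1 + exp(u)) without overflow
    return max(u, 0.0) + math.log1p(math.exp(-abs(u)))

def _log_binom_pmf_logit(x, n, eta):
    # log Binomial(x; n, p) with p = expit(eta)
    return (math.lgamma(n + 1) - math.lgamma(x + 1) - math.lgamma(n - x + 1)
            - x * _softplus(-eta) - (n - x) * _softplus(eta))

def mc_log_pred_density(x0, n0, x1, n1, a, b, mu, tau, draws=20000, seed=2025):
    """Monte Carlo estimate of log p(x0, x1 | a, b, mu, tau) under
    pi0 ~ Beta(a, b), theta ~ N(mu, tau^2), x0 ~ Binom(n0, pi0) and
    x1 ~ Binom(n1, pi1) with logit(pi1) = logit(pi0) + theta (log odds ratio metric)."""
    rng = random.Random(seed)
    logs = []
    for _ in range(draws):
        pi0 = min(max(rng.betavariate(a, b), 1e-12), 1 - 1e-12)  # guard against 0 or 1
        eta0 = math.log(pi0) - math.log1p(-pi0)                    # logit(pi0)
        theta = rng.gauss(mu, tau)
        logs.append(_log_binom_pmf_logit(x0, n0, eta0)
                    + _log_binom_pmf_logit(x1, n1, eta0 + theta))
    m = max(logs)                                                  # log-mean-exp
    return m + math.log(sum(math.exp(v - m) for v in logs) / draws)

# Hypothetical study (12/100 control events, 20/100 treated) and plug-in values
print(mc_log_pred_density(12, 100, 20, 100, a=2.0, b=15.0, mu=0.5, tau=0.2))
```

Reusing the same seed across candidate data points gives common random numbers, which keeps comparisons between studies or metrics less noisy.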

Metric comparison

| Metric | LPD | SE | Diff. vs. OR | SE |
|---|---|---|---|---|
| Odds ratio | -7.300 | 0.151 | | |
| Risk ratio | -7.342 | 0.157 | -0.041 | 0.019 |
| Complementary risk ratio | -7.443 | 0.163 | -0.143 | 0.076 |
| Risk difference | -10.152 | 0.217 | -2.852 | 0.135 |

Predictive discrepancies

Discussion

Effect metric choice is a modeling assumption.

Predictive fit assessment is relevant and useful for meta-analysis.

Log predictive density calculations should be part of meta-analysts’ toolkit.

Will often require use of auxiliary models.

Advantages of log predictive density scoring

Allows comparison across effect metrics and different forms of models.

Auxiliary model building exercise can clarify scientific context.

Disadvantages and open questions

Deshpande and colleagues [28] highlight discrepancies between LPD and other model evaluation metrics.

Which other predictive scoring rules might be relevant?

Is the joint distribution of \(\mathbf{d}_i\) the right focus?